Edge Computing in Events: Processing Data Where It Happens

Introduction: The Latency Problem in Real-Time Events

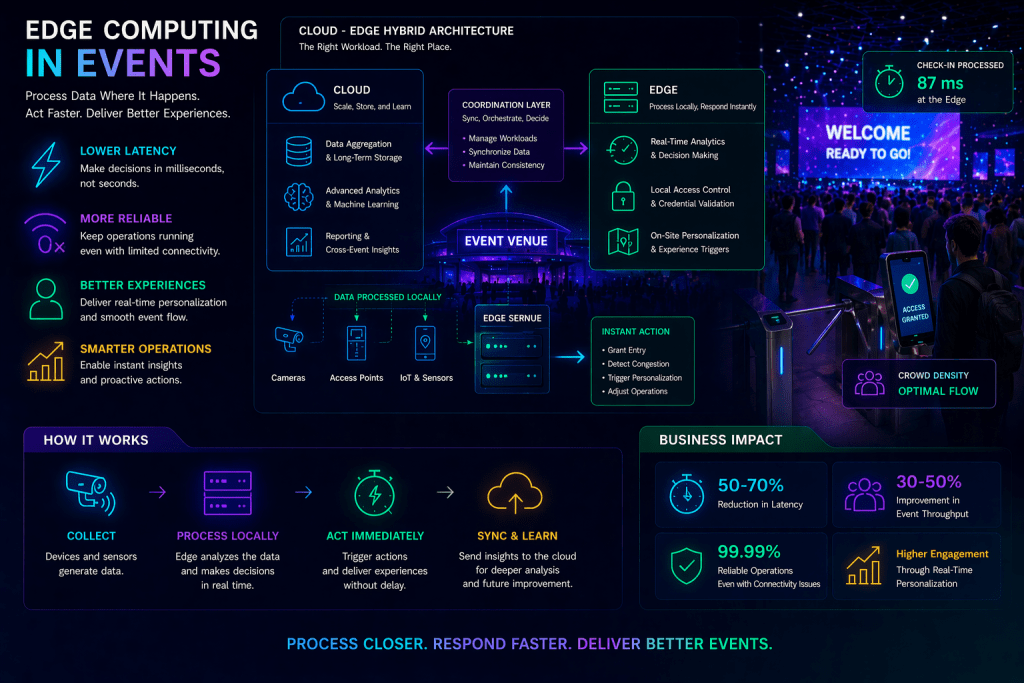

Event environments have become increasingly dependent on real-time data. From access control and personalization to crowd management and live analytics, modern event systems must process and respond to data as it is generated. However, many architectures still rely heavily on centralized cloud infrastructure.

Cloud systems provide scalability and flexibility, but every request pays for a network round trip: data must travel from the event venue to remote servers, be processed, and then return with instructions. In high-density, time-sensitive environments such as entry gates, even small delays can degrade performance and user experience.

Edge computing addresses this limitation by shifting computation closer to the source of data. Instead of relying solely on centralized processing, event systems can analyze and act on data locally, enabling faster, more reliable operations.

Defining Edge Computing in Event Contexts

Edge computing refers to the deployment of computational resources near the data source, rather than in distant cloud environments. In events, this typically means processing data within the venue itself: on local servers, network nodes, or even on devices.

This approach is particularly valuable for applications that require low latency, high responsiveness, or continuous operation in environments with limited connectivity.

Edge computing does not replace cloud infrastructure. Instead, it complements it, creating a hybrid architecture where tasks are distributed based on their requirements.

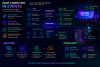

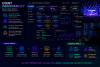

Architectural Model: Cloud-Edge Hybrid Systems

Event systems increasingly adopt hybrid architectures that combine edge and cloud capabilities.

Edge Layer: Local Processing and Immediate Response

The edge layer consists of local computational resources deployed within or near the event venue. These may include:

- On-site servers or micro data centers

- Network edge devices such as routers or gateways

- Embedded processors within devices such as cameras or sensors

This layer handles time-sensitive tasks, such as real-time analytics, device control, and immediate decision-making.

Cloud Layer: Aggregation and Long-Term Processing

The cloud layer provides centralized processing for tasks that are less time-critical. This includes:

- Data aggregation and storage

- Advanced analytics and machine learning training

- Cross-event insights and reporting

By offloading these tasks to the cloud, systems can maintain scalability and reduce the burden on local infrastructure.

Coordination Layer: Synchronization and Orchestration

A coordination layer ensures that edge and cloud systems operate cohesively. It manages data synchronization, task distribution, and consistency across environments.

This layer determines which tasks are processed locally and which are sent to the cloud, optimizing performance and resource utilization.
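The placement decision described above can be sketched as a simple rule. This is a minimal illustration, not a standard algorithm: the `Task` type, the `place_task` function, and the latency figures are all hypothetical, and a real coordination layer would weigh more factors (data volume, cost, device load).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    max_latency_ms: float  # how soon a result is needed (hypothetical field)


def place_task(task: Task, cloud_round_trip_ms: float, cloud_reachable: bool) -> str:
    """Return "edge", "cloud", or "defer" for a task (illustrative rule only)."""
    # A deadline shorter than the cloud round trip cannot be met remotely.
    if task.max_latency_ms < cloud_round_trip_ms:
        return "edge"
    # Less time-critical work goes to the cloud when the uplink is available.
    if cloud_reachable:
        return "cloud"
    # Otherwise hold the task until connectivity returns.
    return "defer"


# Assumed example values: a 100 ms gate scan and an hourly report.
gate_scan = Task("gate_scan", max_latency_ms=100)
hourly_report = Task("hourly_report", max_latency_ms=3_600_000)

print(place_task(gate_scan, cloud_round_trip_ms=250, cloud_reachable=True))       # edge
print(place_task(hourly_report, cloud_round_trip_ms=250, cloud_reachable=True))   # cloud
print(place_task(hourly_report, cloud_round_trip_ms=250, cloud_reachable=False))  # defer
```

Keeping the rule this small makes the trade-off explicit: anything whose deadline is shorter than the measured round trip stays local, and everything else can tolerate the cloud or a delay.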

Use Cases in Event Environments

Edge computing enables a range of applications that depend on real-time responsiveness.

In access control, edge systems can validate credentials locally, reducing delays and ensuring smooth entry even when network connectivity is limited. This is particularly important in high-volume check-in scenarios.
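As a rough sketch of local validation, the example below assumes the list of valid ticket IDs has been copied to the gate device before doors open. The class name `LocalGateValidator`, the result strings, and the `pending_upload` queue are hypothetical choices made for illustration.

```python
class LocalGateValidator:
    """Validates tickets against a local copy of the ticket list (illustrative)."""

    def __init__(self, valid_ticket_ids):
        self._valid = set(valid_ticket_ids)  # synced from the cloud ahead of time
        self._used = set()                   # tickets already admitted at this gate
        self.pending_upload = []             # scan results to send when the uplink allows

    def scan(self, ticket_id: str) -> str:
        # Every decision uses only local state, so no network call is needed.
        if ticket_id not in self._valid:
            result = "rejected_unknown"
        elif ticket_id in self._used:
            result = "rejected_duplicate"
        else:
            self._used.add(ticket_id)
            result = "admitted"
        self.pending_upload.append((ticket_id, result))
        return result


gate = LocalGateValidator(["T-1", "T-2"])
print(gate.scan("T-1"))  # admitted
print(gate.scan("T-1"))  # rejected_duplicate
print(gate.scan("T-9"))  # rejected_unknown
```

One limitation of this sketch: each gate only knows about its own scans, so a ticket reused at a different gate would not be caught until the gates exchange their results.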

For crowd management, edge devices can process data from cameras and sensors to detect congestion or anomalies. Immediate analysis enables rapid intervention, improving safety and flow.
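One simple way an edge device might flag congestion is to compare a rolling average of people counts against a zone's capacity. The sketch below assumes the counts already come from an upstream camera or sensor pipeline. The `CongestionDetector` class, the window size, and the 90% threshold are illustrative assumptions, not recommended values.

```python
from collections import deque


class CongestionDetector:
    """Flags a zone as congested when its recent average count nears capacity."""

    def __init__(self, capacity: int, window: int = 5, threshold: float = 0.9):
        self.capacity = capacity
        self.threshold = threshold
        # Keep only the most recent `window` readings; older ones fall off.
        self._counts = deque(maxlen=window)

    def update(self, people_count: int) -> bool:
        """Record a new reading and return True if the zone looks congested."""
        self._counts.append(people_count)
        average = sum(self._counts) / len(self._counts)
        return average >= self.threshold * self.capacity


detector = CongestionDetector(capacity=100, window=3)
for count in (50, 100, 130, 10):
    print(count, detector.update(count))
```

Averaging over a short window keeps a single noisy reading from triggering an alert, at the cost of reacting a few readings later.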

Personalization systems benefit from edge processing by delivering recommendations with minimal latency. Attendees receive timely, context-aware suggestions based on their current location and behavior.

In live production, edge computing supports low-latency video processing and streaming, enabling smoother broadcasts and real-time enhancements.

Integration with Event Technology Systems

Edge computing integrates with broader event systems to enhance performance and reliability.

Event data platforms aggregate data from edge nodes, creating a unified view while maintaining real-time responsiveness. Middleware systems coordinate communication between edge and cloud components.
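The aggregation step can be pictured as merging per-node summaries into venue-wide totals. The following sketch assumes each edge node reports a small dictionary of counters. The function name `unified_view` and the node and metric names are hypothetical.

```python
from collections import Counter


def unified_view(node_reports: dict) -> dict:
    """Sum the counters reported by every edge node into venue-wide totals."""
    totals = Counter()
    for counters in node_reports.values():
        # Counter.update adds counts rather than replacing them.
        totals.update(counters)
    return dict(totals)


reports = {
    "gate-a": {"entries": 120, "rejections": 3},
    "gate-b": {"entries": 80},
}
print(unified_view(reports))  # {'entries': 200, 'rejections': 3}
```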

Observability systems monitor edge infrastructure, ensuring that performance and reliability are maintained. Digital twins can incorporate edge data to improve simulation accuracy and responsiveness.

This integration creates a distributed system where intelligence is shared across layers.

Operational and Business Impact

The adoption of edge computing has significant operational benefits. Reduced latency improves system responsiveness, enhancing attendee experiences and operational efficiency.

Reliability is also improved. Edge systems can continue to operate even when connectivity to the cloud is disrupted, ensuring continuity of critical functions.
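A common pattern for riding out a lost uplink is store-and-forward: the edge node keeps working locally, queues its events, and uploads them once the connection returns. The sketch below is one minimal way to do this. The class `StoreAndForwardBuffer`, the `send` callable, and the use of `ConnectionError` to signal a failed upload are assumptions for illustration.

```python
from collections import deque


class StoreAndForwardBuffer:
    """Queues events locally and uploads them in order when the uplink works."""

    def __init__(self, send, max_events: int = 10_000):
        self._send = send  # callable that uploads one event; raises ConnectionError on failure
        # Bounded queue: when full, the oldest event is dropped to make room.
        self._queue = deque(maxlen=max_events)

    def record(self, event) -> None:
        self._queue.append(event)

    def flush(self) -> int:
        """Try to upload queued events in order; return how many were sent."""
        sent = 0
        while self._queue:
            try:
                self._send(self._queue[0])
            except ConnectionError:
                break  # uplink still down; keep the remaining events for later
            self._queue.popleft()  # remove only after a successful send
            sent += 1
        return sent

    def __len__(self) -> int:
        return len(self._queue)


# Simulated uplink that can be switched on and off.
uplink = {"up": False}
uploaded = []


def send_to_cloud(event):
    if not uplink["up"]:
        raise ConnectionError("uplink down")
    uploaded.append(event)


buffer = StoreAndForwardBuffer(send_to_cloud)
buffer.record({"scan": "T-1"})
buffer.record({"scan": "T-2"})
print(buffer.flush(), len(buffer))  # 0 2
uplink["up"] = True
print(buffer.flush(), len(buffer))  # 2 0
```

An event is removed from the queue only after its upload succeeds, so a failure mid-flush loses nothing. The bounded queue is a deliberate trade-off: it protects the device's memory during a long outage but discards the oldest events once the limit is reached.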

From a business perspective, edge computing enables more advanced use cases, such as real-time personalization and adaptive operations, which can differentiate events and improve outcomes.

It also optimizes resource usage by distributing workloads across environments, reducing the strain on centralized systems.

Challenges and Considerations

Implementing edge computing introduces new complexities. Managing distributed infrastructure requires robust coordination and monitoring.

Security is a key concern. Because edge devices sit inside the venue rather than in a data center, they may be more exposed than centralized systems and need strong protection and active management.

Data consistency must be maintained across edge and cloud layers. Synchronization mechanisms are essential to ensure that systems operate on accurate and up-to-date information.
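One of several possible synchronization strategies is version-based last-writer-wins: each record carries a version number, and the higher version wins when the edge and cloud copies are reconciled. The sketch below assumes versions increase with every update to a key. The `Record` type and `merge` function are hypothetical, and real systems may need richer conflict handling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    value: str
    version: int  # assumed to increase with every update to the same key


def merge(edge: dict, cloud: dict) -> dict:
    """Reconcile two key -> Record maps, keeping the higher version per key."""
    merged = dict(cloud)
    for key, record in edge.items():
        current = merged.get(key)
        # On a tie, the cloud copy is kept.
        if current is None or record.version > current.version:
            merged[key] = record
    return merged


edge_state = {"T-1": Record("checked_in", 2)}
cloud_state = {"T-1": Record("issued", 1), "T-2": Record("issued", 1)}

result = merge(edge_state, cloud_state)
print(result["T-1"].value)  # checked_in
print(result["T-2"].value)  # issued
```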

There are also cost considerations. Deploying and maintaining edge infrastructure requires investment in hardware and management systems.

Future Outlook: Toward Distributed Event Intelligence

The evolution of edge computing is closely linked to advancements in AI and network technologies. As edge devices become more powerful, they will be able to handle increasingly complex tasks.

Integration with 5G and next-generation networks will further reduce latency and improve connectivity, enabling more seamless coordination between edge and cloud systems.

Event environments will evolve into distributed intelligence systems, where computation is dynamically allocated based on context and requirements.

This shift will support more adaptive, responsive, and scalable event operations.

Conclusion: Bringing Intelligence Closer to the Experience

Edge computing represents a critical shift in how event systems are designed and operated. By processing data closer to where it is generated, it enables faster responses, improved reliability, and more advanced capabilities.

In environments where timing and responsiveness are essential, this approach provides a significant advantage. It allows events to operate as real-time systems, capable of adapting to conditions as they unfold.

For event technology leaders, edge computing is not just an optimization. It is a foundational component of modern, distributed event architectures.