Extended Reality (XR) in Events: A Unified Framework for Spatial Experiences

Introduction: From Isolated Modalities to Integrated Spatial Computing

Event technology has explored immersive formats through Virtual Reality (VR), Augmented Reality (AR), and Mixed Reality (MR), each offering distinct capabilities. However, treating these technologies as separate layers fragments design, infrastructure, and user experience.

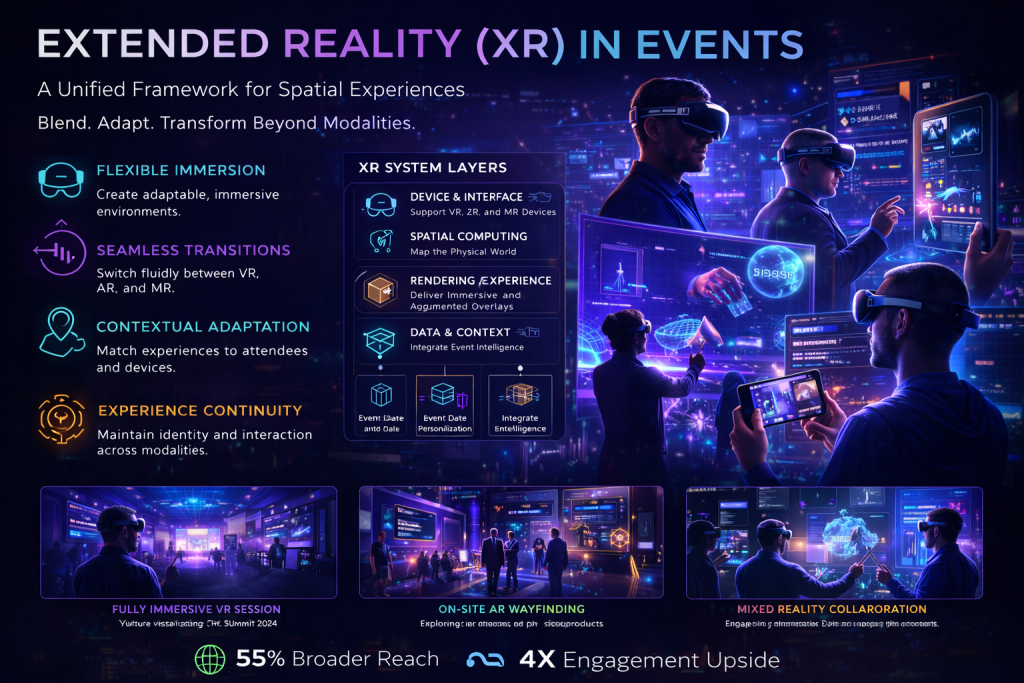

Extended Reality (XR) represents a unifying framework that encompasses VR, AR, and MR within a single spatial computing paradigm. Instead of selecting one modality, XR enables event systems to dynamically adapt experiences based on context, device capability, and user intent.

For event technology, this convergence shifts the focus from individual technologies to a cohesive spatial experience layer that integrates with both physical environments and digital systems.

Defining XR in Event Contexts

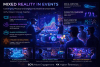

Extended Reality refers to the spectrum of immersive technologies that blend physical and digital environments. In event contexts, XR enables experiences that can transition between:

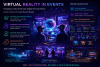

- Fully immersive virtual environments (VR)

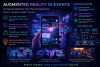

- Contextual overlays on physical spaces (AR)

- Interactive, shared environments combining both (MR)

The key characteristic is adaptability. XR systems can deliver different levels of immersion based on the scenario, without requiring entirely separate infrastructures.

This flexibility allows event organizers to design experiences that are inclusive, scalable, and context-aware.

Architectural Model: A Unified XR Stack

XR systems require an integrated architecture that supports multiple modalities while maintaining consistency.

Device and Interface Layer

XR experiences are accessed through a range of devices:

- VR headsets for immersive environments

- AR-enabled smartphones and tablets

- MR headsets for spatial interaction

The system must detect device capabilities and adapt content accordingly, ensuring consistent experiences across platforms.
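As a minimal sketch of this capability detection, the snippet below maps a device's reported features to a modality. The `DeviceCapabilities` fields, the `FLAT` fallback, and the decision order are assumptions made for illustration; a real deployment would query whatever capability API its XR runtime exposes.

```python
from dataclasses import dataclass
from enum import Enum


class Modality(Enum):
    VR = "vr"
    MR = "mr"
    AR = "ar"
    FLAT = "2d"  # non-immersive fallback; an assumption of this sketch


@dataclass(frozen=True)
class DeviceCapabilities:
    """Hypothetical capability report sent by a client when it connects."""
    stereo_display: bool   # renders a separate image per eye (headsets)
    passthrough: bool      # can show the physical world behind content
    world_tracking: bool   # tracks its own pose in the room


def select_modality(caps: DeviceCapabilities) -> Modality:
    """Pick the richest modality the device can support."""
    if caps.stereo_display and caps.passthrough and caps.world_tracking:
        return Modality.MR
    if caps.stereo_display and caps.world_tracking:
        return Modality.VR
    if caps.passthrough and caps.world_tracking:
        return Modality.AR
    return Modality.FLAT


# A phone with a camera feed and world tracking, but no stereo display.
phone = DeviceCapabilities(stereo_display=False, passthrough=True, world_tracking=True)
print(select_modality(phone))
```

Checking the richest modality first and falling through to a flat view keeps every device on some version of the experience rather than excluding it.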

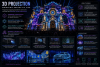

Spatial Computing Layer

At the core of XR is spatial computing, which enables systems to understand and interact with physical environments. This includes:

- Environmental mapping and spatial anchoring

- Object recognition and tracking

- Persistent spatial data storage

This layer provides the foundation for aligning digital content with physical space.
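To make spatial anchoring and persistence concrete, here is a small sketch under simplifying assumptions: an anchor is a position plus a rotation about the vertical axis, and persistence is a JSON string. The `Anchor` class and the `dump_anchors` and `load_anchors` helpers are hypothetical names, not part of any particular XR SDK.

```python
import json
import math
from dataclasses import asdict, dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class Anchor:
    """A fixed reference point in the venue (y is up, yaw rotates about y)."""
    anchor_id: str
    x: float
    y: float
    z: float
    yaw_deg: float

    def to_world(self, local: Tuple[float, float, float]) -> Tuple[float, float, float]:
        """Convert a point expressed relative to the anchor into venue coordinates."""
        lx, ly, lz = local
        t = math.radians(self.yaw_deg)
        # Rotate about the vertical axis, then translate by the anchor position.
        wx = self.x + lx * math.cos(t) + lz * math.sin(t)
        wz = self.z - lx * math.sin(t) + lz * math.cos(t)
        return (wx, self.y + ly, wz)


def dump_anchors(anchors: Dict[str, Anchor]) -> str:
    """Serialize anchors so they can be stored and reloaded in a later session."""
    return json.dumps([asdict(a) for a in anchors.values()])


def load_anchors(payload: str) -> Dict[str, Anchor]:
    """Rebuild the anchor table from its serialized form."""
    return {d["anchor_id"]: Anchor(**d) for d in json.loads(payload)}


stage = Anchor("stage-left", x=10.0, y=0.0, z=5.0, yaw_deg=90.0)
# Place a sign one metre along the anchor's local x axis and two metres up.
print(stage.to_world((1.0, 2.0, 0.0)))
```

Because content is positioned relative to an anchor rather than in raw venue coordinates, re-surveying the venue only requires updating the anchor, not every piece of content attached to it.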

Rendering and Experience Engine

The rendering engine generates content for different modalities, adapting visuals and interactions based on context.

For example, a session may be experienced as a fully immersive environment in VR, while the same content appears as contextual overlays in AR.

This requires modular content design and efficient rendering pipelines.
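One way to read "modular content design" is that a session is described once and each modality gets its own planner. The sketch below illustrates that idea; `SessionContent`, the planner functions, and the shape of the returned dictionaries are all assumptions for illustration rather than a real engine's API.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class SessionContent:
    """Modality-independent description of a session."""
    title: str
    speaker: str
    stage_model: str  # identifier of a 3D asset; hypothetical


def plan_vr(content: SessionContent) -> dict:
    # VR loads the full scene and places the attendee inside it.
    return {
        "mode": "vr",
        "load_scene": content.stage_model,
        "label": f"{content.title} ({content.speaker})",
    }


def plan_ar(content: SessionContent) -> dict:
    # AR shows a lightweight card over the physical room instead of a scene.
    return {
        "mode": "ar",
        "overlay_card": {"title": content.title, "speaker": content.speaker},
    }


PLANNERS: Dict[str, Callable[[SessionContent], dict]] = {"vr": plan_vr, "ar": plan_ar}


def build_render_plan(content: SessionContent, modality: str) -> dict:
    """Turn one content definition into a modality-specific render plan."""
    try:
        planner = PLANNERS[modality]
    except KeyError:
        raise ValueError(f"no planner registered for modality {modality!r}") from None
    return planner(content)


keynote = SessionContent("Opening Keynote", "A. Speaker", "stage-main")
print(build_render_plan(keynote, "vr"))
print(build_render_plan(keynote, "ar"))
```

Adding a modality then means registering one more planner, while the content definition stays unchanged.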

Data and Context Layer

XR experiences are driven by data. Event data platforms provide inputs such as attendee profiles, schedules, and real-time conditions.

Contextual intelligence determines what content is displayed, where it appears, and how it behaves. This ensures that experiences remain relevant and personalized.
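A simple form of this contextual selection can be sketched as a filter over the schedule: show the next session that matches the attendee's interests and starts soon. The `Session` fields, the 30-minute default horizon, and the function name are illustrative assumptions, not values taken from any event platform.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Iterable, Optional, Set


@dataclass(frozen=True)
class Session:
    title: str
    topic: str
    room: str
    starts_at: datetime


def next_relevant_session(
    sessions: Iterable[Session],
    interests: Set[str],
    now: datetime,
    horizon: timedelta = timedelta(minutes=30),  # assumed look-ahead window
) -> Optional[Session]:
    """Return the soonest upcoming session matching the attendee's interests."""
    candidates = [
        s for s in sessions
        if s.topic in interests and now <= s.starts_at <= now + horizon
    ]
    return min(candidates, key=lambda s: s.starts_at, default=None)


now = datetime(2025, 1, 1, 9, 0)
schedule = [
    Session("AI in Venues", "ai", "Hall A", datetime(2025, 1, 1, 9, 20)),
    Session("Sponsor Design", "sponsorship", "Hall B", datetime(2025, 1, 1, 9, 10)),
    Session("AI Ethics", "ai", "Hall C", datetime(2025, 1, 1, 11, 0)),
]
choice = next_relevant_session(schedule, {"ai"}, now)
print(choice.title if choice else "nothing to show")
```

The returned session answers "what" to display; its `room` field can then drive "where" the overlay appears, for example as a wayfinding marker toward that room.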

Networking and Synchronization

Multi-user experiences require real-time synchronization across devices and environments. The networking layer must keep shared state consistent so that every participant sees the same interactions, regardless of modality.

Low-latency communication is essential, particularly for shared experiences and live interactions.
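One common way to keep shared state consistent when updates arrive in different orders on different devices is a last-writer-wins rule. The sketch below is an illustration of that general technique, not a description of any specific XR networking stack; the `Update` fields and the tie-break on `client_id` are assumptions.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class Update:
    object_id: str
    version: int       # increases with every change to the object
    client_id: str     # used only to break ties between equal versions
    value: Tuple[float, float, float]  # e.g. the object's position


class SharedState:
    """Last-writer-wins store: every replica converges on the same values."""

    def __init__(self) -> None:
        self._latest: Dict[str, Update] = {}

    def apply(self, update: Update) -> bool:
        """Apply an update; return False if it is stale and was ignored."""
        current = self._latest.get(update.object_id)
        if current is None or (update.version, update.client_id) > (
            current.version,
            current.client_id,
        ):
            self._latest[update.object_id] = update
            return True
        return False

    def value_of(self, object_id: str) -> Optional[Tuple[float, float, float]]:
        update = self._latest.get(object_id)
        return None if update is None else update.value


first = Update("banner-1", 1, "alice", (0.0, 1.0, 0.0))
second = Update("banner-1", 2, "bob", (1.0, 1.0, 0.0))

headset, phone = SharedState(), SharedState()
for u in (first, second):   # the headset receives updates in order
    headset.apply(u)
for u in (second, first):   # the phone receives them out of order
    phone.apply(u)
print(headset.value_of("banner-1") == phone.value_of("banner-1"))
```

Because stale updates are discarded rather than applied, replicas agree regardless of delivery order, at the cost of dropping the losing write when two clients edit the same object concurrently.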

Experience Design: Fluid Transitions Across Modalities

One of the defining capabilities of XR is the ability to transition seamlessly between different modes of experience.

An attendee might begin exploring an event through a mobile AR interface, then enter a fully immersive VR session, and later interact with MR elements on-site. The experience remains continuous, with identity, context, and interactions preserved.
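The continuity described above can be sketched as a single journey record that travels with the attendee while only the modality field changes. `JourneyState` and its helper functions are hypothetical names used for illustration.

```python
from dataclasses import dataclass, replace
from typing import Optional, Tuple


@dataclass(frozen=True)
class JourneyState:
    """State that should survive a switch between AR, VR, and MR."""
    attendee_id: str
    modality: str
    current_session: Optional[str] = None
    interactions: Tuple[str, ...] = ()


def join_session(state: JourneyState, session_id: str) -> JourneyState:
    return replace(state, current_session=session_id)


def record_interaction(state: JourneyState, event: str) -> JourneyState:
    return replace(state, interactions=state.interactions + (event,))


def switch_modality(state: JourneyState, new_modality: str) -> JourneyState:
    # Only the modality changes; identity, context, and history carry over.
    return replace(state, modality=new_modality)


state = JourneyState(attendee_id="att-42", modality="ar")
state = record_interaction(state, "bookmarked:keynote")
state = join_session(state, "keynote")
state = switch_modality(state, "vr")
print(state)
```

Keeping the record immutable and producing a new copy on each change makes a handoff between devices a matter of sending the latest record, with no per-modality state to reconcile.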

Designing for XR requires thinking beyond individual experiences to create cohesive journeys. Content must be adaptable, ensuring that it remains meaningful across different levels of immersion.

This approach reduces fragmentation and enhances continuity.

Integration with Event Technology Ecosystems

XR functions as an experience layer that integrates with the broader event technology stack.

Event data platforms provide the contextual foundation, while personalization engines tailor experiences to individual users. Real-time orchestration systems coordinate XR interactions with physical and digital components.

Digital twins enhance XR by providing accurate models of environments and systems. Edge computing supports low-latency processing, ensuring responsiveness across modalities.
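As a rough illustration of how edge computing can serve a latency target, the sketch below picks the compute node with the lowest median round-trip time that still fits a caller-supplied budget. The node names, sample values, and the budget are placeholders, and `pick_edge_node` is a hypothetical helper rather than part of any edge platform.

```python
from statistics import median
from typing import Dict, List, Optional


def pick_edge_node(
    rtt_samples_ms: Dict[str, List[float]],
    budget_ms: float,
) -> Optional[str]:
    """Return the node with the lowest median RTT within budget, else None."""
    # Nodes with no measurements are skipped rather than guessed at.
    medians = {
        node: median(samples)
        for node, samples in rtt_samples_ms.items()
        if samples
    }
    within_budget = {node: m for node, m in medians.items() if m <= budget_ms}
    if not within_budget:
        return None
    return min(within_budget, key=within_budget.get)


# Placeholder measurements in milliseconds.
samples = {
    "venue-edge": [8.0, 9.0, 30.0],
    "regional-cloud": [40.0, 42.0, 41.0],
}
print(pick_edge_node(samples, budget_ms=20.0))
```

Using the median rather than the mean keeps a single slow sample from disqualifying an otherwise nearby node.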

This integration enables XR to operate as part of a unified event system rather than an isolated feature.

Operational and Business Impact

The adoption of XR has significant implications for event design and delivery.

For attendees, it provides flexible access to experiences. Users can engage with events in ways that suit their preferences and capabilities, whether through immersive environments or lightweight overlays.

For organizers, XR enables new formats and revenue models. Experiences can be extended beyond physical venues, reaching broader audiences while maintaining engagement.

Sponsors benefit from immersive and interactive environments that support deeper engagement and storytelling.

Strategically, XR positions events as advanced digital-physical ecosystems, capable of delivering differentiated experiences.

Challenges and Considerations

Implementing XR requires addressing several challenges.

Device fragmentation is a primary concern. Supporting multiple devices and modalities introduces complexity in design and development.

Content creation must be modular and adaptable, which requires new workflows and tools.

Performance optimization is critical, particularly for real-time rendering and multi-user synchronization.

Accessibility must be considered to ensure that experiences are inclusive and not limited to users with advanced hardware.

Future Outlook: Toward Persistent XR Event Ecosystems

The evolution of XR points toward persistent spatial environments that extend across events and platforms.

Attendees may maintain continuous identities within XR ecosystems, enabling ongoing engagement and interaction. Events could become nodes within larger digital environments, rather than isolated experiences.

Advances in hardware, including lightweight wearable devices, will improve accessibility. Improvements in spatial computing and AI will enhance contextual intelligence and adaptability.

As these technologies mature, XR is likely to become a foundational layer in event ecosystems.

Conclusion: A Unified Vision for Immersive Events

Extended Reality represents the convergence of immersive technologies into a unified framework. By integrating VR, AR, and MR, it enables flexible, adaptive experiences that align with the evolving nature of events.

This approach moves beyond isolated implementations, creating cohesive systems where physical and digital elements interact seamlessly.

For event technology leaders, XR offers a strategic opportunity to redefine how events are experienced, transforming them into interconnected, spatially intelligent environments that extend beyond traditional boundaries.