Autonomous Camera Drones in Events: Aerial Intelligence for Live Production and Operations

Introduction: Expanding the Visual and Operational Field

Event production has traditionally relied on fixed cameras, handheld rigs, and crane systems to capture and broadcast experiences. While these tools have evolved significantly, they remain constrained by physical positioning, operator limitations, and predefined coverage zones. As events scale in size and complexity, these constraints limit both creative expression and operational visibility.

Autonomous camera drones introduce a new dimension. By combining aerial mobility with onboard intelligence, they extend both the visual field and the sensing capability of event environments. Drones are no longer merely tools for capturing cinematic footage; they are becoming mobile, data-generating systems that integrate into the broader event technology stack.

From Remote Control to Autonomous Systems

Early drone usage in events focused on manual aerial videography. Skilled pilots controlled flight paths, framing, and movement, often under strict regulatory and safety constraints.

The integration of AI, computer vision, and onboard processing has shifted this model. Modern drones can:

- Track subjects dynamically without manual input

- Maintain stable framing using visual recognition

- Adapt flight paths based on environmental conditions

- Coordinate with other devices and systems
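To make "maintain stable framing using visual recognition" concrete, here is a minimal sketch of one common idea: turning a detected subject's bounding box into yaw and gimbal-pitch corrections with a proportional controller. The `BoundingBox` type, the `framing_correction` function, the gains, the deadband, and the sign conventions are all hypothetical choices for illustration, not any vendor's API. Real gimbal and flight stacks add filtering and tuned PID loops on top of an idea like this.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class BoundingBox:
    """Detected subject box in pixel coordinates; (x, y) is the top-left corner."""
    x: float
    y: float
    w: float
    h: float


def framing_correction(
    box: BoundingBox,
    frame_w: int,
    frame_h: int,
    target_fx: float = 0.5,   # desired horizontal position of the subject (fraction of width)
    target_fy: float = 0.5,   # desired vertical position (fraction of height)
    k_yaw: float = 60.0,      # deg/s per unit of normalized horizontal error (assumed gain)
    k_pitch: float = 40.0,    # deg/s per unit of normalized vertical error (assumed gain)
    max_rate: float = 30.0,   # clamp on commanded rates, deg/s (assumed)
    deadband: float = 0.02,   # ignore errors under 2% of the frame to avoid jitter
) -> Tuple[float, float]:
    """Return (yaw_rate, gimbal_pitch_rate) in deg/s that move the subject toward the target point.

    Sign convention (assumed): positive yaw turns right, positive pitch tilts the gimbal up.
    """
    cx = (box.x + box.w / 2) / frame_w
    cy = (box.y + box.h / 2) / frame_h
    err_x = cx - target_fx  # > 0 when the subject sits right of the target
    err_y = target_fy - cy  # > 0 when the subject sits above the target (image y grows downward)

    def rate(err: float, gain: float) -> float:
        if abs(err) < deadband:
            return 0.0
        return max(-max_rate, min(max_rate, gain * err))

    return rate(err_x, k_yaw), rate(err_y, k_pitch)


# A subject drifting toward the right third of a 1080p frame.
print(framing_correction(BoundingBox(1340, 490, 200, 100), 1920, 1080))  # (15.0, 0.0)
```

The deadband and the rate clamp are what keep the shot "stable": small detection jitter produces no motion, and large jumps never produce a violent pan.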

This evolution enables semi-autonomous and fully autonomous operation, reducing reliance on continuous human control while increasing precision and scalability.

System Architecture: Distributed Aerial Intelligence

Autonomous camera drones operate as distributed systems, combining sensing, computation, and communication.

Onboard Sensing and Perception

Drones are equipped with multiple sensors to perceive their environment:

- High-resolution RGB cameras for capture and vision processing

- Depth sensors and LiDAR for obstacle detection

- GPS and inertial measurement units (IMUs) for positioning

- Ultrasonic sensors for short-range awareness

These inputs create a real-time model of the drone’s surroundings.
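How those inputs combine can be illustrated with a deliberately simplified, one-axis example: an alpha-beta filter that dead-reckons with IMU acceleration and corrects with GPS fixes. This is a swapped-in teaching technique; production flight controllers commonly use extended Kalman filters over all axes and more sensors. The class name `PositionFuser1D` and the gains are hypothetical.

```python
class PositionFuser1D:
    """Alpha-beta filter fusing IMU acceleration with GPS position along one axis.

    The IMU runs at a high rate but drifts when integrated; GPS fixes arrive less
    often and are noisier but do not drift. Gains alpha and beta are assumed values.
    """

    def __init__(self, position: float = 0.0, velocity: float = 0.0,
                 alpha: float = 0.2, beta: float = 0.05) -> None:
        self.position = position  # metres
        self.velocity = velocity  # metres per second
        self.alpha = alpha        # share of the GPS residual applied to position
        self.beta = beta          # share of the GPS residual applied to velocity

    def predict(self, accel: float, dt: float) -> None:
        """Dead-reckon forward dt seconds using the IMU's measured acceleration."""
        self.position += self.velocity * dt + 0.5 * accel * dt * dt
        self.velocity += accel * dt

    def correct(self, gps_position: float, dt_since_fix: float) -> None:
        """Blend in a GPS fix: the residual nudges both position and velocity."""
        residual = gps_position - self.position
        self.position += self.alpha * residual
        self.velocity += (self.beta / dt_since_fix) * residual


fuser = PositionFuser1D()
fuser.predict(accel=1.0, dt=1.0)                   # IMU-only estimate: 0.5 m, 1.0 m/s
fuser.correct(gps_position=1.5, dt_since_fix=1.0)  # GPS says we are further along
print(round(fuser.position, 3), round(fuser.velocity, 3))  # 0.7 1.05
```

The point of the design is complementary error behaviour: integrating a biased accelerometer alone drifts without bound, while the periodic GPS correction keeps the estimate anchored.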

Edge Processing and Computer Vision

Onboard processors handle real-time computation, enabling drones to operate independently of centralized systems.

Computer vision models support:

- Object detection (e.g., speakers, performers, crowds)

- Subject tracking and re-identification

- Scene segmentation and classification

Processing at the edge minimizes latency, allowing drones to respond immediately to changing conditions.
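Subject tracking usually sits on top of a per-frame detector: each new set of detections has to be matched to the subjects already being followed. A minimal sketch of that association step, using intersection-over-union (IoU) with greedy matching, is shown below. The function names and the 0.3 threshold are illustrative assumptions. This covers only frame-to-frame association; re-identifying a performer after a long occlusion typically also compares appearance features, which are not shown here.

```python
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def associate(tracks: Dict[int, Box], detections: List[Box],
              threshold: float = 0.3) -> Tuple[Dict[int, int], List[int]]:
    """Greedily match detections to existing track IDs, highest IoU first.

    Returns (matches, unmatched): matches maps track_id -> detection index;
    unmatched lists detection indices that could start new tracks.
    """
    pairs = sorted(
        ((iou(box, det), tid, di)
         for tid, box in tracks.items()
         for di, det in enumerate(detections)),
        reverse=True,
    )
    matches: Dict[int, int] = {}
    used = set()
    for score, tid, di in pairs:
        if score < threshold:
            break  # remaining pairs overlap too little to be the same subject
        if tid in matches or di in used:
            continue
        matches[tid] = di
        used.add(di)
    unmatched = [di for di in range(len(detections)) if di not in used]
    return matches, unmatched


tracks = {1: (0, 0, 10, 10), 2: (100, 100, 110, 110)}
detections = [(101, 101, 111, 111), (1, 0, 11, 10), (300, 300, 310, 310)]
print(associate(tracks, detections))  # ({1: 1, 2: 0}, [2])
```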

Navigation and Control Systems

Flight control systems integrate sensor data with control algorithms to manage movement.

Capabilities include:

- Autonomous path planning

- Collision avoidance in dynamic environments

- Stabilization under varying conditions
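Autonomous path planning is often explained with A* search over an occupancy grid. The sketch below assumes a 2D grid where `#` marks a blocked cell, unit-cost moves, and 4-connectivity; `plan_path` is a hypothetical name. It is a stand-in for the planners real drones use, which work in 3D and, for collision avoidance in dynamic environments, replan as the map is updated.

```python
import heapq
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, int]


def plan_path(grid: List[str], start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """A* over a 2D occupancy grid ('#' = blocked, '.' = free) with 4-connected moves.

    Returns the cells from start to goal inclusive, or None if the goal is unreachable.
    """
    rows, cols = len(grid), len(grid[0])

    def h(c: Cell) -> int:
        # Manhattan distance never overestimates for unit-cost 4-connected moves.
        return abs(c[0] - goal[0]) + abs(c[1] - goal[1])

    open_heap = [(h(start), 0, start)]
    came_from: Dict[Cell, Optional[Cell]] = {start: None}
    g_cost: Dict[Cell, int] = {start: 0}
    while open_heap:
        _, g, cur = heapq.heappop(open_heap)
        if cur == goal:
            path, node = [], cur
            while node is not None:  # walk parent links back to the start
                path.append(node)
                node = came_from[node]
            return path[::-1]
        if g > g_cost[cur]:
            continue  # stale heap entry superseded by a cheaper route
        r, c = cur
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] != "#":
                ng = g + 1
                if ng < g_cost.get(nxt, float("inf")):
                    g_cost[nxt] = ng
                    came_from[nxt] = cur
                    heapq.heappush(open_heap, (ng + h(nxt), ng, nxt))
    return None


venue = ["....",
         ".##.",   # e.g. a lighting truss the drone must route around
         "...."]
print(plan_path(venue, (0, 0), (2, 3)))
```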

In indoor environments, where GPS is unreliable, drones rely on SLAM (Simultaneous Localization and Mapping) to navigate accurately.
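Full SLAM estimates the drone's pose and the map together, which is too involved for a short example. The mapping half can still be sketched: given a pose, each range reading lowers the occupancy log-odds of cells the beam passed through and raises it where the beam ended. In the sketch below the pose is assumed known, the log-odds increments are assumed sensor-model values, and the half-cell ray marching is a simplification (exact grid traversal, such as Bresenham-style stepping, avoids skipping corner cells). All names are hypothetical.

```python
import math
from typing import Dict, List, Tuple

Cell = Tuple[int, int]

# Assumed inverse sensor model, expressed as log-odds increments.
L_OCC = 0.85    # added to the cell where a beam ended (likely an obstacle)
L_FREE = -0.4   # added to cells the beam passed through (likely free)
L_MIN, L_MAX = -5.0, 5.0  # clamp so cells can still flip if the scene changes


def _cell(x: float, y: float, cell_m: float) -> Cell:
    return (math.floor(x / cell_m), math.floor(y / cell_m))


def _add(grid: Dict[Cell, float], cell: Cell, delta: float) -> None:
    grid[cell] = max(L_MIN, min(L_MAX, grid.get(cell, 0.0) + delta))


def update_grid(grid: Dict[Cell, float], pose: Tuple[float, float, float],
                scan: List[Tuple[float, float]], cell_m: float = 0.1) -> None:
    """Fold one 2D range scan into a sparse log-odds occupancy grid.

    pose = (x_m, y_m, heading_rad) is taken as known here; in SLAM it is estimated
    jointly with the map. scan = [(bearing_rad, range_m), ...] relative to the heading.
    """
    x0, y0, heading = pose
    for bearing, rng in scan:
        angle = heading + bearing
        dx, dy = math.cos(angle), math.sin(angle)
        end = _cell(x0 + rng * dx, y0 + rng * dy, cell_m)
        free = set()
        # March along the beam in half-cell steps and collect the cells it crosses.
        for k in range(int(rng / (cell_m / 2))):
            d = k * cell_m / 2
            c = _cell(x0 + d * dx, y0 + d * dy, cell_m)
            if c != end:
                free.add(c)
        for c in free:
            _add(grid, c, L_FREE)
        _add(grid, end, L_OCC)


def occupancy_probability(log_odds: float) -> float:
    """Convert log-odds back to a probability in [0, 1]."""
    return 1.0 - 1.0 / (1.0 + math.exp(log_odds))


grid: Dict[Cell, float] = {}
update_grid(grid, pose=(0.05, 0.05, 0.0), scan=[(0.0, 1.0)])  # one beam hitting a wall 1 m ahead
print(round(occupancy_probability(grid[(10, 0)]), 2))
```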

Communication and Integration

Drones communicate with ground systems and other devices through wireless networks. This enables:

- Real-time video streaming to production systems

- Coordination with other cameras or drones

- Integration with event control and orchestration platforms

Low-latency communication is essential for synchronization with live workflows.
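Synchronizing drone feeds with a live workflow requires drone timestamps to be expressed on the production clock. One well-established way to estimate the offset between two clocks over a network is the four-timestamp exchange used by NTP (RFC 5905). The sketch below applies that calculation; the `ClockSample` type and helper names are hypothetical, and timestamps are in milliseconds.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple


@dataclass
class ClockSample:
    t0: float  # drone sends request (drone clock, ms)
    t1: float  # ground station receives request (production clock, ms)
    t2: float  # ground station sends reply (production clock, ms)
    t3: float  # drone receives reply (drone clock, ms)


def offset_and_delay(s: ClockSample) -> Tuple[float, float]:
    """NTP-style estimate of (production clock - drone clock) and the round-trip network delay."""
    offset = ((s.t1 - s.t0) + (s.t2 - s.t3)) / 2
    delay = (s.t3 - s.t0) - (s.t2 - s.t1)
    return offset, delay


def best_offset(samples: Iterable[ClockSample]) -> float:
    """Trust the sample with the smallest round trip; it is least distorted by queuing."""
    return min((offset_and_delay(s) for s in samples), key=lambda od: od[1])[0]


def to_production_time(drone_timestamp_ms: float, offset_ms: float) -> float:
    """Map a frame timestamp from the drone clock onto the production clock."""
    return drone_timestamp_ms + offset_ms


# Drone clock runs 500 ms behind; the second exchange hit a congested uplink.
samples = [ClockSample(10_000, 10_510, 10_511, 10_021),
           ClockSample(20_000, 20_560, 20_561, 20_071)]
print(offset_and_delay(samples[0]))  # (500.0, 20)
print(best_offset(samples))          # 500.0
```

The estimate assumes the outbound and return paths take equal time; asymmetric delay (as in the second sample) biases the offset, which is why the lowest-delay sample is preferred.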

Integration with Live Production Workflows

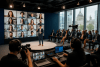

Autonomous drones are increasingly integrated into professional production environments as intelligent camera units.

Directors can define high-level objectives—such as tracking a keynote speaker or capturing audience reactions—while drones handle execution. This reduces operational complexity and enables more dynamic coverage.

In multi-camera setups, drones function as part of a coordinated system, complementing fixed and handheld cameras. Their mobility allows them to capture perspectives that would otherwise require complex rigging.

Beyond Videography: Operational Intelligence

The role of drones extends beyond visual capture into operational intelligence.

Crowd Monitoring

Aerial perspectives provide valuable insights into crowd density and movement patterns. Computer vision models can analyze these patterns in real time, identifying congestion or anomalies.
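A simple version of that analysis is a density grid: bin detected people into fixed-size ground cells and flag cells above a threshold. The sketch assumes detections have already been projected from the image into ground-plane metres (which requires camera calibration and the drone's pose, not shown). The 5 m cell size and the 4 people/m² threshold are placeholders chosen for illustration, not safety guidance, and the function names are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple

Cell = Tuple[int, int]


def density_map(positions: Iterable[Tuple[float, float]],
                cell_size_m: float = 5.0) -> Dict[Cell, float]:
    """Bin ground-plane positions (metres) into square cells; return people per m² per occupied cell."""
    counts = Counter((int(x // cell_size_m), int(y // cell_size_m)) for x, y in positions)
    area = cell_size_m * cell_size_m
    return {cell: n / area for cell, n in counts.items()}


def congested_cells(density: Dict[Cell, float], threshold_per_m2: float = 4.0) -> List[Cell]:
    """Return cells at or above the threshold, densest first."""
    hot = [(d, cell) for cell, d in density.items() if d >= threshold_per_m2]
    return [cell for _, cell in sorted(hot, reverse=True)]


# 100 people packed near the stage barrier, 10 spread out nearby.
people = [(1.0, 1.0)] * 100 + [(6.0, 1.0)] * 10
density = density_map(people)
print(density)                   # {(0, 0): 4.0, (1, 0): 0.4}
print(congested_cells(density))  # [(0, 0)]
```

Comparing successive density maps over time would be one way to pick up the movement patterns and anomalies mentioned above.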

Security and Surveillance

Drones can patrol large venues, monitor restricted areas, and respond to incidents quickly. Their mobility enables coverage of areas that are difficult to monitor with fixed infrastructure.

Logistics and Coordination

In large venues, drones can assist with inspection, coordination, and situational awareness. While still emerging, these use cases highlight their potential as operational assets.

Experience Design and Audience Impact

From an attendee perspective, drones enhance both live and broadcast experiences.

Aerial footage adds cinematic quality to events, creating more engaging visual narratives. In large-scale events, this perspective helps convey scale and energy.

In some scenarios, drones become part of the experience itself—integrated into performances or synchronized with lighting and audio systems.

The challenge lies in balancing visibility and safety. Drones must enhance the experience without becoming intrusive.

Safety, Regulation, and Risk Management

Operating drones in event environments requires strict adherence to safety and regulatory standards.

Key considerations include:

- Geofencing to restrict flight areas

- Redundant systems for fail-safe operation

- Collision avoidance mechanisms

- Compliance with aviation regulations
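In practice, geofences are configured in the autopilot or the manufacturer's ground software, but the underlying check is easy to illustrate. The sketch below combines a ray-casting point-in-polygon test with an altitude ceiling and optional no-fly zones (for example, airspace directly above a crowd). Coordinates are assumed to be local metres, and all names and limits are hypothetical.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def inside_polygon(p: Point, polygon: List[Point]) -> bool:
    """Ray-casting test: count how many edges a ray from p toward +x crosses."""
    x, y = p
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        if (y1 > y) != (y2 > y):  # edge straddles the horizontal line through p
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def geofence_ok(position: Point, altitude_m: float, fence: List[Point],
                ceiling_m: float, no_fly: Sequence[List[Point]] = ()) -> bool:
    """True if the drone is inside the fence, below the ceiling, and outside every no-fly zone."""
    if not 0.0 <= altitude_m <= ceiling_m:
        return False
    if not inside_polygon(position, fence):
        return False
    return not any(inside_polygon(position, zone) for zone in no_fly)


venue = [(0, 0), (100, 0), (100, 100), (0, 100)]
over_crowd = [(40, 40), (60, 40), (60, 60), (40, 60)]
print(geofence_ok((10, 10), 20, venue, ceiling_m=30, no_fly=[over_crowd]))  # True
print(geofence_ok((50, 50), 20, venue, ceiling_m=30, no_fly=[over_crowd]))  # False
```

A real system would also apply a buffer margin so the drone turns back before reaching the boundary, and would trigger a fail-safe action rather than just returning `False`.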

Risk management is critical, particularly in crowded environments. Systems must be designed to handle failures without compromising safety.

Technical Challenges

Despite their capabilities, autonomous drones face several constraints.

Indoor navigation remains complex because GPS signals are weak or unavailable indoors. SLAM offers an alternative, but it requires precise sensor calibration.

Battery life limits flight duration, necessitating rotation strategies or multiple drones for continuous coverage.
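The rotation arithmetic is straightforward. If each drone flies for a time $T_f$, spends $T_t$ on the ground for landing, battery swap or charging, and relaunch, and must overlap its replacement for a handover time $T_h$, then covering $k$ airborne positions continuously needs roughly $\lceil k (T_f + T_t) / (T_f - T_h) \rceil$ drones. The helper below is a hypothetical sketch of that estimate; the example durations are placeholders, not specifications of any aircraft.

```python
import math


def drones_for_continuous_coverage(flight_min: float, turnaround_min: float,
                                   handover_min: float = 0.0,
                                   airborne_slots: int = 1) -> int:
    """Minimum fleet size that keeps `airborne_slots` drones on station at all times.

    Each drone repeats a cycle of flight_min in the air plus turnaround_min on the
    ground. During handover_min of each flight it overlaps with its replacement, so
    it contributes only flight_min - handover_min of unique coverage per cycle.
    """
    useful = flight_min - handover_min
    if useful <= 0:
        raise ValueError("handover must be shorter than the flight time")
    cycle = flight_min + turnaround_min
    return math.ceil(airborne_slots * cycle / useful)


print(drones_for_continuous_coverage(30, 30))                  # 2
print(drones_for_continuous_coverage(30, 30, handover_min=5))  # 3
```

The second case shows why handovers matter: a five-minute overlap pushes a tidy two-drone rotation up to three.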

Environmental factors—such as lighting conditions and obstacles—can impact sensor performance and model accuracy.

Integration with existing systems adds complexity, requiring robust APIs and synchronization mechanisms.

Future Outlook: Swarm Systems and Autonomous Coverage

The next phase of drone technology in events is likely to involve swarm intelligence—multiple drones operating collaboratively.

Swarm systems can provide:

- Redundant coverage

- Coordinated multi-angle capture

- Distributed sensing across large areas
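Coordinated multi-angle capture can be reduced to two small steps: generate evenly spaced viewpoints around a subject, then assign drones to viewpoints so total travel is minimized. The sketch below uses brute force over permutations for the assignment, which is only practical for a handful of drones; larger swarms would use a proper assignment algorithm such as the Hungarian method. Function names, the orbit radius, and positions in local metres are assumptions for illustration.

```python
import itertools
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def orbit_slots(center: Point, radius_m: float, count: int,
                start_deg: float = 0.0) -> List[Point]:
    """Evenly spaced viewpoints on a circle around the subject, one per drone."""
    cx, cy = center
    step = 2 * math.pi / count
    start = math.radians(start_deg)
    return [(cx + radius_m * math.cos(start + i * step),
             cy + radius_m * math.sin(start + i * step))
            for i in range(count)]


def assign_slots(drones: Dict[str, Point], slots: List[Point]) -> Dict[str, Point]:
    """Give each drone a slot so the summed straight-line travel is minimal.

    Exhaustive search over permutations: exact, but factorial in swarm size.
    """
    names = list(drones)
    best, best_cost = None, math.inf
    for perm in itertools.permutations(range(len(slots)), len(names)):
        cost = sum(math.dist(drones[n], slots[j]) for n, j in zip(names, perm))
        if cost < best_cost:
            best, best_cost = perm, cost
    return {n: slots[j] for n, j in zip(names, best)}


swarm = {"a": (-9.0, 0.0), "b": (9.0, 0.0), "c": (0.0, 9.0), "d": (0.0, -9.0)}
plan = assign_slots(swarm, orbit_slots(center=(0.0, 0.0), radius_m=10.0, count=4))
for name, (x, y) in sorted(plan.items()):
    print(name, round(x, 1), round(y, 1))
```

Because the viewpoints are spaced evenly, the swarm covers the subject from every side, and re-running the assignment as the subject moves keeps each drone's repositioning short.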

Advances in AI will enable drones to understand context more deeply, making decisions based on event dynamics rather than predefined rules.

Improvements in battery technology, edge computing, and network infrastructure will further expand capabilities.

Conclusion: Aerial Systems as Event Infrastructure

Autonomous camera drones are evolving from specialized tools into integral components of event infrastructure. They extend the capabilities of both production and operations, providing mobility, intelligence, and real-time responsiveness.

As event systems become more interconnected, drones will play a central role in sensing, capturing, and interpreting event environments.

For event technology leaders, the focus is no longer just on using drones for footage—but on integrating them into a broader architecture where aerial intelligence contributes to how events are experienced and managed.